Random forest is an extension of bagging that often improves prediction noticeably. The idea is to randomly select \(m\) out of \(p\) predictors as candidate variables for each split in each tree. More specifically, while growing a decision tree during the bagging process, random forests perform split-variable randomization: each time a split is to be made, only those \(m\) randomly chosen predictors are considered. In plain bagging, a single strong predictor tends to dominate every tree. Random forest checks this tendency by randomly selecting a subset of columns, so a strong predictor (P5 in the example discussed below) is present in only a few subsets, and the skew it causes is averaged out during aggregation. The trees therefore learn from more varied views of the data and the ensemble predicts more accurately, which is why random forest is generally preferred over bagging when ensembling decision trees.
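The split-variable randomization described above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not a full tree learner. The helper names `candidate_features` and `candidate_rate`, the values \(p = 9\) and \(m = 3\), and the placement of the strong predictor at index 4 are all assumptions made for the example.

```python
import random


def candidate_features(p, m, rng):
    """Return m distinct predictor indices drawn at random from range(p)."""
    if not 1 <= m <= p:
        raise ValueError("m must satisfy 1 <= m <= p")
    return rng.sample(range(p), m)


def candidate_rate(p, m, feature, n_splits, seed=0):
    """Fraction of simulated splits in which `feature` is a candidate."""
    rng = random.Random(seed)
    hits = sum(feature in candidate_features(p, m, rng) for _ in range(n_splits))
    return hits / n_splits


# Hypothetical setting: p = 9 predictors, m = 3 candidates per split,
# and the strong predictor P5 stored at index 4.
rate = candidate_rate(p=9, m=3, feature=4, n_splits=10_000)
print(f"P5 was a split candidate in {rate:.1%} of splits (m/p = {3 / 9:.1%})")
```

Because each split only sees \(m\) of the \(p\) predictors, a strong predictor is a candidate in roughly \(m/p\) of the splits rather than all of them. In scikit-learn, the corresponding setting on `RandomForestClassifier` is the `max_features` parameter.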

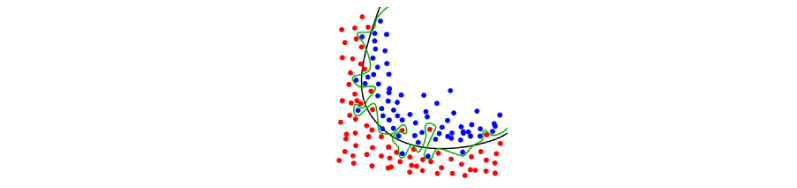

There is a very simple and powerful concept behind RF: the wisdom of the crowd. A random forest consists of many trees; each tree predicts its own class, and the model's final decision is the class that receives the most votes across the trees (Fig.). A random forest is a meta estimator that fits a number of decision tree classifiers on various sub-samples of the dataset and uses averaging to improve predictive accuracy and control over-fitting. The random forest classifier uses the bagging technique, with a decision tree classifier as the base learner.

In random forest, we apply the same general bagging technique using decision trees as the weak learners, along with one extra modification: random forest works like bagging except that not all features (independent variables) are selected in a subset. In bagging, the subsets differ from the original data only in their rows; in random forest, the subsets differ from the original data in both their rows and their columns. Secondly, random forest works only with decision trees, whereas in bagging any algorithm can be used.

Let me elaborate. Say you have a dataset with \(p\) independent variables, and one of them, say P5, is quite strong. In the bagging approach this variable is present in all the subsets and hence dominates the learning process of all the models. Because all the subsets in bagging share the same columns, the models created from them are also correlated. Random forest reduces that correlation by giving each subset different columns, which makes it an even more optimized way of using decision trees than bagging.

Bagging and random forest techniques have shown remarkable results on ... However, why do even random forests fail when it comes to modelling financial ...

Chanseok Kang. Python, Datacamp, MachineLearning. A summary of the lecture 'Machine Learning with Tree-Based Models in Python'.
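To make the row-versus-column distinction and the voting step concrete, here is a small sketch using only the Python standard library. It follows the per-subset description given above, in which each tree receives its own random set of columns; standard implementations usually draw the columns afresh at every split instead. No trees are actually trained. The function names, the sizes (100 rows, 9 columns, 3 columns per tree, 200 trees), and the index 4 for the strong predictor P5 are assumptions made for illustration.

```python
import random
from collections import Counter


def bootstrap_rows(n_rows, rng):
    """Sample n_rows row indices with replacement (a bootstrap sample)."""
    return [rng.randrange(n_rows) for _ in range(n_rows)]


def bagging_subset(n_rows, n_cols, rng):
    """Bagging: resampled rows, but every column is kept."""
    return bootstrap_rows(n_rows, rng), list(range(n_cols))


def forest_subset(n_rows, n_cols, m, rng):
    """Random-forest style subset: resampled rows and m random columns."""
    return bootstrap_rows(n_rows, rng), sorted(rng.sample(range(n_cols), m))


def majority_vote(predictions):
    """Return the class predicted by the most trees."""
    return Counter(predictions).most_common(1)[0][0]


rng = random.Random(42)
n_rows, n_cols, m, n_trees = 100, 9, 3, 200
strong = 4  # index of the hypothetical strong predictor P5

bagged = [bagging_subset(n_rows, n_cols, rng) for _ in range(n_trees)]
forest = [forest_subset(n_rows, n_cols, m, rng) for _ in range(n_trees)]

# Share of trees that can use the strong predictor at all.
share_bagged = sum(strong in cols for _, cols in bagged) / n_trees
share_forest = sum(strong in cols for _, cols in forest) / n_trees
print(f"P5 available to {share_bagged:.0%} of bagged trees")
print(f"P5 available to {share_forest:.0%} of forest trees")

# Aggregation: each tree casts one vote and the most common class wins.
print(majority_vote(["spam", "ham", "spam", "spam", "ham"]))  # prints spam
```

Every bagged subset contains P5, so every bagged tree can lean on it, while only about a third of the forest subsets do. That is the mechanism by which the trees become less correlated before their votes are combined.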
